On 13 November 2025, Anthropic, a company that, in its own words, builds AI agents to “study their safety properties at the technological frontier”, released a report claiming it had disrupted a major nation-state cyber-espionage operation that co-opted its services to execute the attack. The full report is fascinating, and you should read it because it shows how AI is already changing how knowledge workers operate. The headline claim is that a group Anthropic believes is Chinese state-sponsored co-opted Claude to run a cyber campaign at scale, with far fewer personnel than would be possible without modern AI tooling.

So far, so cyberpunk dystopia. But beyond the ever-creeping William Gibson-esque future we’re barrelling towards, it points to how organisations will use AI agents to gain a competitive advantage. Critics have argued Anthropic is overhyping this to promote its latest models. This wasn’t an AI vibecoding zero-day exploits or fully automating a successful hack. In some ways it’s almost prosaic. I think that criticism misses what this event reveals.

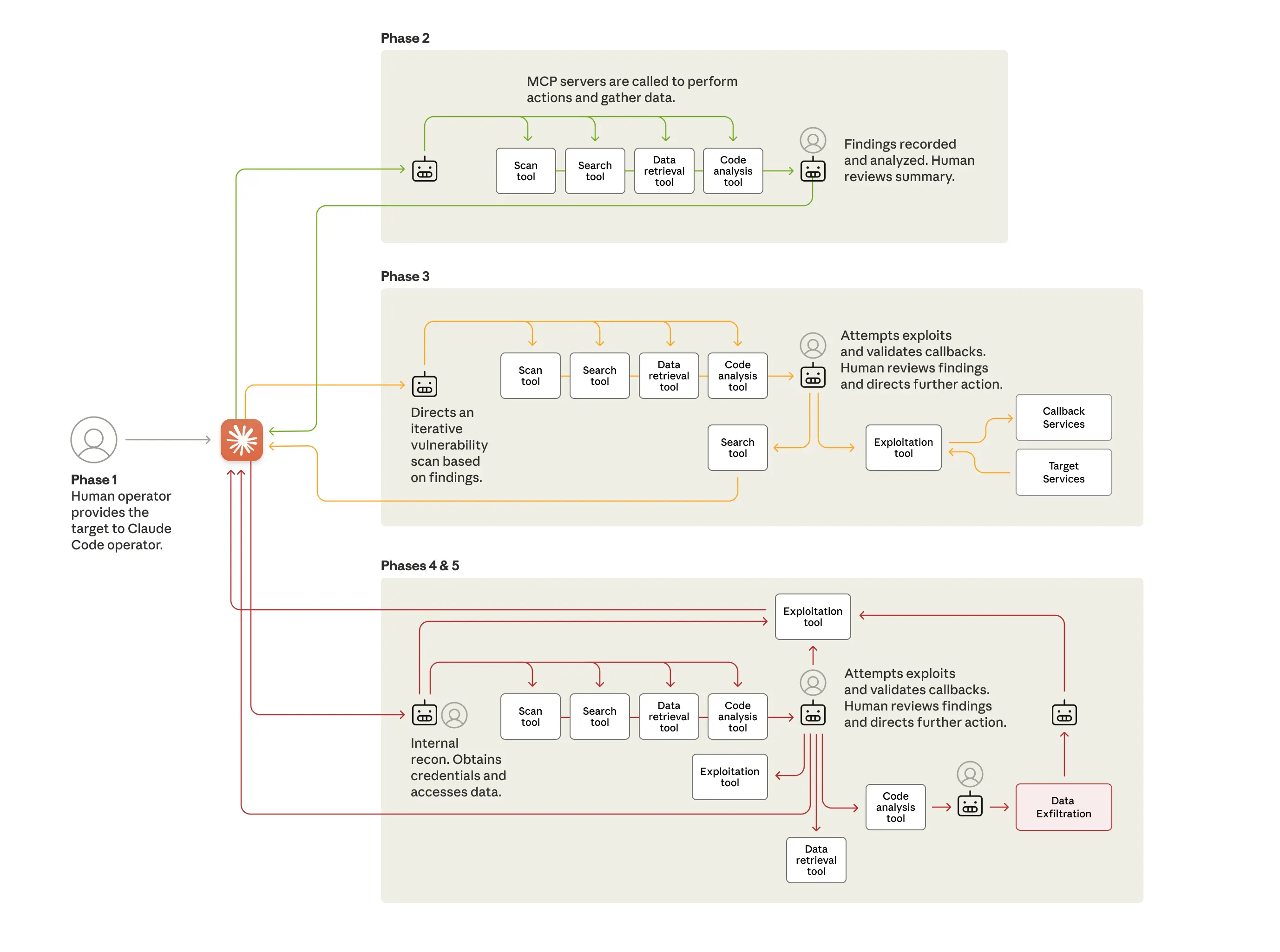

The part that made me pay attention was this diagram.

In my day job in cloud engineering and architecture I see a lot of diagrams like this: a bunch of arrows pointing at boxes. This one shows how you can use AI agents like Claude to orchestrate sophisticated knowledge work. To be clear, there isn’t much in it that is new or revolutionary from a technology perspective. What the attackers have done is glue a bunch of existing, well-known tools together and use AI to scale their use.

So are the critics right? Is none of this groundbreaking?

I don’t think so. The boring truth about innovation is that it usually looks something like this: taking a technique or tool that already exists and applying it to a new field or purpose. This is innovation in cyberwarfare. In a traditional cyber attack you’d have a handful of senior engineers directing junior colleagues through the grunt work while the seniors track the objective and steer the campaign. Here, the juniors aren’t needed. But this isn’t without challenges. Anthropic found that the AI agents hallucinated, reporting that they had successfully breached targets when they hadn’t. It also found that the people operating the agents had to work hard to keep them on task and to stop them from spending far too long on solutions that were obviously wrong.

All of these, besides (hopefully) the hallucinations, show up in a traditional team too. Anyone who’s mentored junior engineers knows the pattern. They need clear instructions, they need help when they get stuck, and their work needs careful review. That’s no different when, instead of a junior engineer scanning a network, you have an AI agent doing it.

The difference is scaling. Scaling humans means hiring, training, supervision, and management overhead. Scaling AI agents mostly means telling your boss how you’re going to use AI to speed up your work, and that it’s going to cost a bit more in software licences. That is a much easier sell. An AI agent isn’t going to get sick or sleep in after having one too many the night before.

And this innovation isn’t limited to state-sponsored hackers, or even to software engineering. This is coming to an office function near you. There are some indications we’re already seeing the effect on the job market, as entry-level positions are harder to justify when you could just hire another experienced worker and give them the best AI tools you can afford.

On the other hand, despite the daily headlines claiming entire industries are about to be replaced with ChatGPT, these models aren’t autonomous enough to handle complex work unsupervised. What they are is good enough to do skilled work with oversight. The automation wave that washed over manufacturing is heading towards office work, and it will change the nature of work for people who spend most of their days in front of a computer.

How it changes our economy is uncertain. It might drive a spike in unemployment. Or it might free people from administrative drudgery and let them focus on the parts of their job that are most rewarding and productive.

The uncomfortable question for organisations trying to navigate this new world is what comes next. How do you replace the experienced workers you need to direct your shiny new AI agents if the low-risk work that used to train the next generation disappears first?